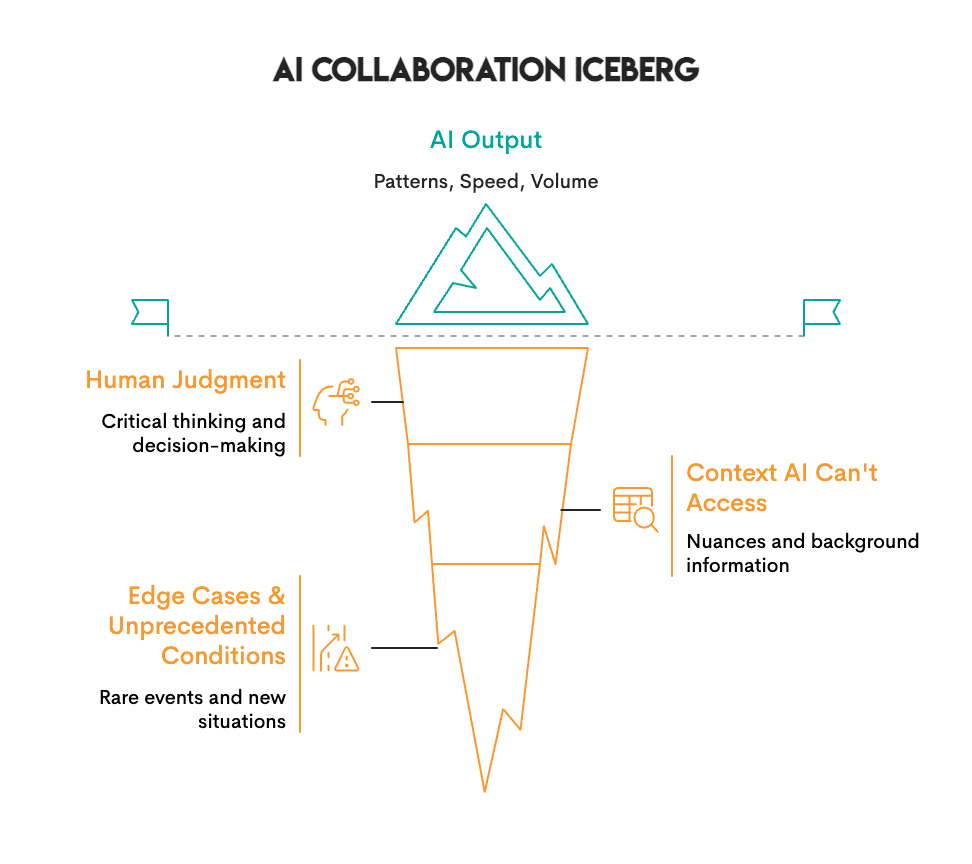

The global landscape of artificial intelligence is undergoing a fundamental paradigm shift, moving away from a traditional "command-and-response" model toward a sophisticated framework of human-AI collaboration. While early iterations of AI were viewed primarily as tools for automation—executing discrete tasks with minimal human intervention—the current vanguard of the technology focuses on "co-intelligence." In this emerging environment, AI systems do not merely follow instructions; they surface patterns, generate options, and provide transparent reasoning, while human operators provide the essential context, ethical oversight, and final decision-making authority. This shift is most visible in high-stakes sectors such as biotechnology, clinical medicine, and global finance, where the integration of machine speed and human judgment is yielding results that neither could achieve in isolation.

The Transformation of Scientific Research and Drug Discovery

The integration of collaborative AI has perhaps reached its highest level of maturity within the life sciences. Historically, identifying viable drug compounds was a grueling endeavor characterized by high failure rates and long timelines. Traditional drug development typically required four to five years just to identify a promising lead compound. Generative AI platforms have fundamentally altered this timeline.

Insilico Medicine, a leader in end-to-end AI-driven drug discovery, demonstrated the power of this collaborative model by reducing the discovery timeline for a lead compound from the industry standard of 60 months to just 18 months: a 70% reduction in time, or roughly a 3.3x speed-up. Their platform functions as a collaborator by screening tens of thousands of potential molecules and predicting their efficacy and safety profiles. Crucially, the system does not operate in a vacuum. Medicinal chemists review the AI-generated candidates, refine molecular structures based on nuanced chemical intuition, and design the physical experiments necessary for validation.
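Going from 60 months to 18 months is a 70% cut in elapsed time, which is the same as a speed-up of about 3.3x. A minimal Python sketch of that arithmetic follows; `percent_reduction` is a hypothetical helper name introduced here for illustration, and the only inputs are the two durations quoted above.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the percentage by which `after` is smaller than `before`."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Durations quoted in the article, in months.
BASELINE_MONTHS = 60
AI_ASSISTED_MONTHS = 18

reduction = percent_reduction(BASELINE_MONTHS, AI_ASSISTED_MONTHS)
speedup = BASELINE_MONTHS / AI_ASSISTED_MONTHS

print(f"Timeline reduction: {reduction:.0f}%")
print(f"Speed-up factor: {speedup:.1f}x")
```

The two numbers describe the same change from different angles: the reduction measures time saved against the old baseline, while the speed-up measures how many times faster the new process is.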

This synergy is mirrored in the realm of proteomics. DeepMind’s AlphaFold has predicted the structures of nearly all known proteins, a feat that would have taken traditional laboratories decades, if not centuries, to accomplish experimentally. Despite this breakthrough, the scientific community emphasizes that AlphaFold is a collaborator rather than a replacement. Scientists must still interpret the biological significance of these structures and determine how they interact within the complex ecosystem of a living cell. AI can predict "what" a structure looks like, but human expertise is required to understand "why" it matters and "how" it can be leveraged for therapeutic breakthroughs.

Clinical Precision: The Hybrid Model in Healthcare

In clinical settings, particularly pathology and oncology, the collaborative model is actively saving lives by increasing diagnostic accuracy. A landmark study conducted by the Beth Israel Deaconess Medical Center highlighted the gap between independent human analysis and collaborative efforts. When pathologists reviewed tissue slides for cancer detection independently, they achieved a 96% accuracy rate. When they used PathAI, a platform that uses machine learning to flag suspicious cell clusters, the accuracy rate climbed to 99.5%, which cuts the error rate from 4% to 0.5%.

This result illustrates the move toward "augmented intelligence." AI excels at maintaining consistent performance over thousands of images, identifying minute patterns that a fatigued human eye might overlook. Conversely, the pathologist provides the clinical context: the patient’s medical history, comorbid conditions, and the practical implications of a diagnosis. This "human-in-the-loop" (HITL) system ensures that the AI serves as a high-speed filter while the human remains the ultimate arbiter of medical truth.
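The division of labor in a human-in-the-loop workflow can be sketched in a few lines of Python. This is an illustrative sketch only, not PathAI's actual design: the `Finding` record, the `suspicion` score, the 0.5 threshold, and both function names are assumptions made for the example. The model only decides the order of review; every slide still receives a human decision.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Finding:
    slide_id: str
    suspicion: float  # hypothetical model score in [0, 1]; higher is more suspicious


def build_review_queue(
    findings: list[Finding], flag_threshold: float = 0.5
) -> tuple[list[Finding], list[Finding]]:
    """Split slides into (flagged, routine); flagged are sorted most suspicious first."""
    flagged = sorted(
        (f for f in findings if f.suspicion >= flag_threshold),
        key=lambda f: f.suspicion,
        reverse=True,
    )
    routine = [f for f in findings if f.suspicion < flag_threshold]
    return flagged, routine


def sign_out(
    findings: list[Finding],
    pathologist_decides: Callable[[Finding], str],
    flag_threshold: float = 0.5,
) -> dict[str, str]:
    """Record a human decision for every slide, reviewing flagged slides first."""
    flagged, routine = build_review_queue(findings, flag_threshold)
    return {f.slide_id: pathologist_decides(f) for f in flagged + routine}


findings = [Finding("S-001", 0.08), Finding("S-002", 0.91), Finding("S-003", 0.55)]
flagged, routine = build_review_queue(findings)
print([f.slide_id for f in flagged])
print([f.slide_id for f in routine])
```

Note that `sign_out` never lets the model score stand in for a diagnosis: the score changes the queue order, and the `pathologist_decides` callback supplies the outcome for every slide.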

Redefining Financial and Legal Operations

The corporate world is seeing a similar overhaul of legacy processes through collaborative AI. In legal operations, JPMorgan Chase previously faced a staggering administrative burden, with legal teams spending approximately 360,000 hours annually on the manual review of commercial loan agreements. To address this, the firm developed COiN (Contract Intelligence).

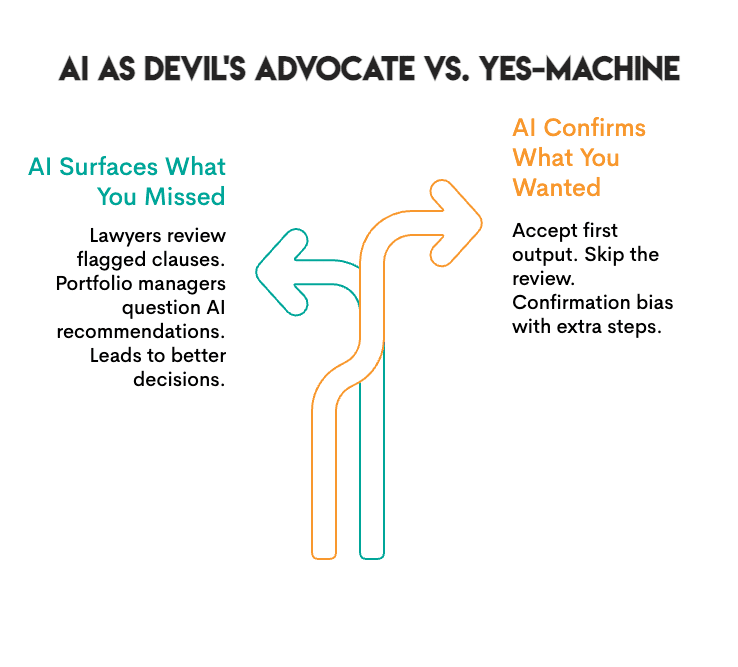

Using natural language processing (NLP), COiN reviews documents in seconds, extracting key data points and flagging questionable clauses. The implementation of this system did not lead to the mass termination of legal staff; instead, it shifted their focus. Attorneys now spend their time on high-level strategy and complex negotiations—tasks requiring emotional intelligence and ethical nuance—while the AI handles the repetitive data extraction. Reports indicate that this collaboration has reduced compliance errors by 80%, demonstrating that AI’s primary value lies in enhancing human accuracy rather than replacing human presence.

In global asset management, BlackRock’s Aladdin (Asset, Liability, Debt and Derivative Investment Network) is a prominent example of collaborative risk management. Managing over $21.6 trillion in assets, the platform processes massive volumes of market data to identify potential risks. However, the final allocation of capital remains the responsibility of human portfolio managers. By combining Aladdin’s real-time analytics with human judgment, BlackRock has consistently outperformed both purely algorithmic and purely manual investment strategies. The success of this model has led to over 200 financial institutions licensing the platform, signaling a broader industry acceptance of the human-AI partnership.

The Mechanics of Effective Collaboration: Explainability and Transparency

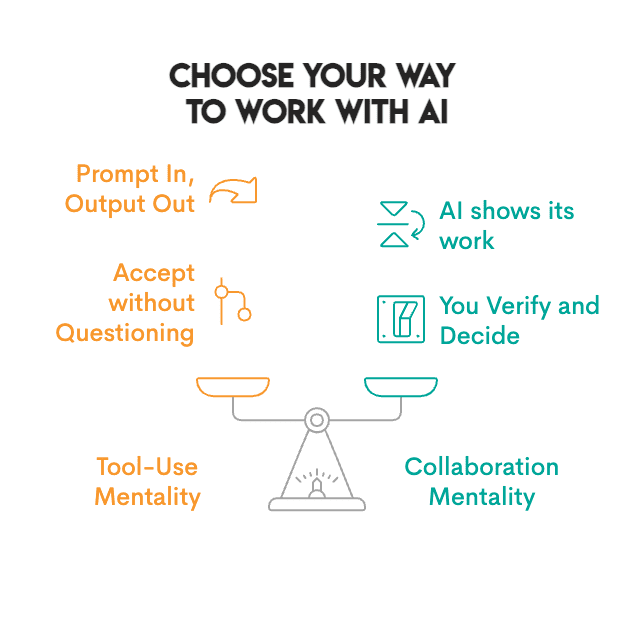

A critical component of successful human-AI teaming is the move away from "black box" systems. For a human to truly collaborate with a machine, the machine must be able to "show its work." This has given rise to the field of Explainable AI (XAI). Collaborative tools are now designed to provide citations, show the underlying code used for calculations, and offer a confidence score for their outputs.

Industry analysts suggest that the difference between a tool and a collaborator lies in the verification process. A tool provides an answer; a collaborator provides a rationale. When AI systems are transparent about their reasoning, humans can more easily identify "hallucinations" or logical errors, thereby maintaining the integrity of the workflow. This transparency is increasingly becoming a regulatory requirement, as evidenced by the European Union’s AI Act, which emphasizes the need for human oversight and technical robustness in high-risk AI applications.
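One way to picture the difference between an answer and a rationale is as a data structure plus a verification step. The Python sketch below is hypothetical: the `AssistantAnswer` fields, the `review_flags` function, and the 0.8 confidence threshold are assumptions chosen for illustration, not the interface of any specific XAI product or a requirement taken from the AI Act.

```python
from dataclasses import dataclass, field


@dataclass
class AssistantAnswer:
    answer: str
    rationale: str = ""  # the system's stated reasoning
    citations: list[str] = field(default_factory=list)  # sources a human can check
    confidence: float = 0.0  # self-reported score in [0, 1]


def review_flags(result: AssistantAnswer, min_confidence: float = 0.8) -> list[str]:
    """List the reasons a human reviewer should scrutinize this output before use."""
    flags = []
    if not result.rationale.strip():
        flags.append("no rationale given")
    if not result.citations:
        flags.append("no citations to verify")
    if result.confidence < min_confidence:
        flags.append(
            f"confidence {result.confidence:.2f} below {min_confidence:.2f}"
        )
    return flags


bare = AssistantAnswer(answer="Clause 14 is non-standard.")
explained = AssistantAnswer(
    answer="Clause 14 is non-standard.",
    rationale="The clause differs from the template wording on indemnity.",
    citations=["template section 9"],
    confidence=0.93,
)
print(review_flags(bare))
print(review_flags(explained))
```

An empty list of flags does not mean the answer is correct. It means only that the output carries enough material (reasoning, sources, a confidence estimate) for a human to check it.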

Implications for the Global Labor Market and Professional Training

As collaborative AI becomes the standard, the skills required for the modern workforce are evolving. The "prompt in, response out" mentality is being replaced by a need for critical evaluation and "AI literacy." Career experts note that companies are already adjusting their recruitment processes to test for these skills.

In technical interviews for data science and engineering roles, recruiters are no longer just looking for the ability to generate code using AI. They are observing how candidates interact with the technology. A candidate who blindly accepts an AI-generated solution is often viewed as a liability. In contrast, candidates who question the AI’s logic, refine its outputs, and provide additional context are seen as high-value assets. This shift suggests that the most valuable professionals of the next decade will not be those who can code the fastest, but those who can most effectively manage and audit AI collaborators.

Broader Impact and Future Outlook

The broader implications of this shift are economic, ethical, and operational. Economically, the increase in productivity, such as the 70% reduction in lead-compound discovery time (from 60 months to 18), could lead to lower costs for essential services like healthcare. Operationally, it allows organizations to scale their expertise without a linear increase in headcount.

However, the transition is not without risks. There is a documented phenomenon known as "automation bias," where humans become overly reliant on machine outputs and stop exercising critical judgment. To mitigate this, organizations are implementing "adversarial" practices, such as periodically requiring teams to work without AI to maintain their baseline skills and ensure they remain capable of catching machine errors.

The future of work is not a binary choice between human and machine; it is a synthesis of the two. The examples from JPMorgan, BlackRock, and Insilico Medicine suggest that the most successful organizations are those that treat AI as a junior partner: one capable of incredible speed and volume, but one that requires the steady hand of human experience to navigate the complexities of the real world. As these systems continue to evolve, the focus will remain on building environments where transparency, verification, and mutual feedback form the bedrock of the human-machine relationship. This collaborative frontier represents the next great leap in industrial and scientific progress, promising a future where the limits of human cognitive capacity are eased by the processing power of the digital age.