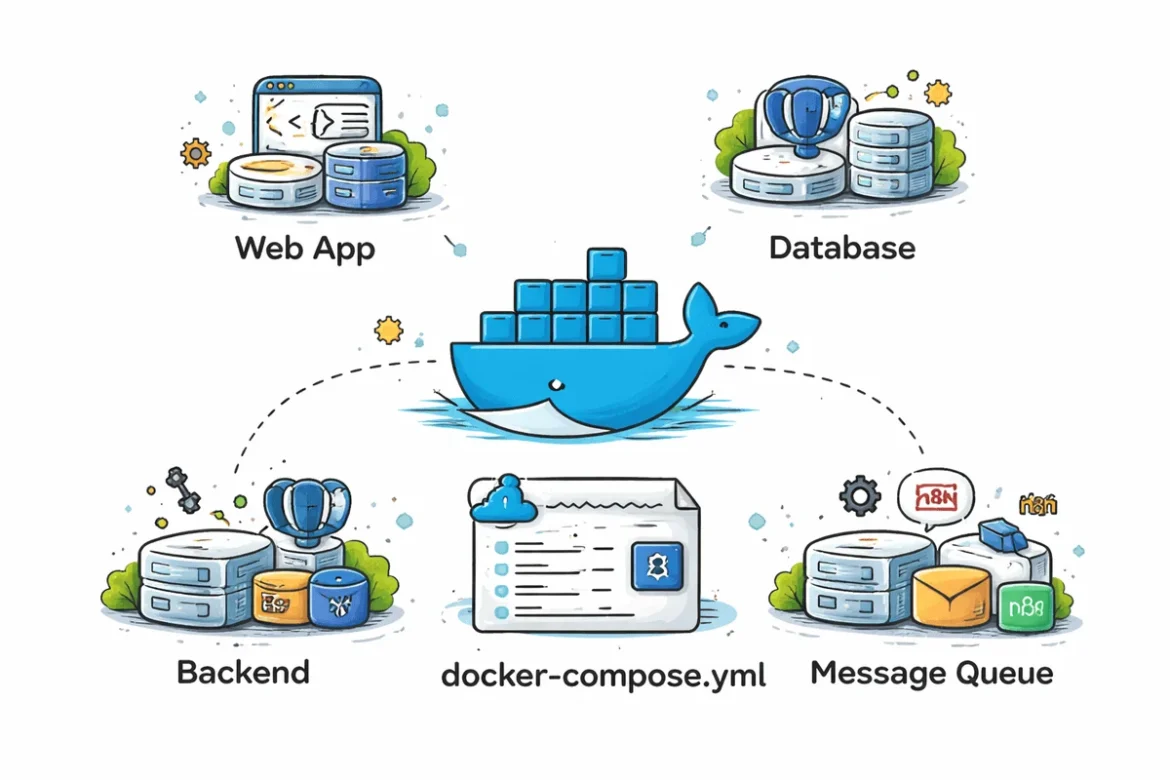

The evolution of software engineering has increasingly moved toward containerization as a standard for ensuring environment consistency and deployment reliability. Docker, since its inception in 2013, has fundamentally altered the landscape of DevOps by allowing developers to package applications and their dependencies into portable containers. However, as modern applications grew in complexity, often requiring multiple interconnected services such as databases, caches, and message brokers, the need for a simplified orchestration tool led to the rise of Docker Compose. By utilizing a single YAML file to define multi-container environments, Docker Compose has become an essential tool for local development and staging environments.

The Paradigm Shift in Development Environments

Historically, the "works on my machine" problem plagued software development teams. Differences in operating systems, library versions, and environment configurations frequently led to bugs that appeared only in production. Docker addressed this by isolating applications from the underlying infrastructure. Docker Compose streamlined things further by letting developers spin up an entire stack with a single command: docker compose up (or docker-compose up with the legacy standalone V1 tool).
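As a minimal sketch of what such a single-file stack can look like, the hypothetical Compose file below pairs a web server with a database. The service names, image tags, and password are placeholders chosen for illustration and are not taken from any particular project.

```yaml
# compose.yaml (hypothetical example; all names and values are placeholders)
services:
  web:
    image: nginx:alpine            # any application image could stand in here
    ports:
      - "8080:80"                  # host port 8080 -> container port 80
    depends_on:
      - db                         # start the database container first
  db:
    image: postgres:16
    environment:
      POSTGRES_PASSWORD: example   # placeholder; suitable for local use only
    volumes:
      - db_data:/var/lib/postgresql/data   # keep data across restarts

volumes:
  db_data:
```

Run from the directory containing this file, `docker compose up -d` should start both containers in the background, and `docker compose down` stops and removes them again.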

According to the 2023 Stack Overflow Developer Survey, Docker remains the most used and desired tool among developers, with over 52% of professional developers utilizing it in their daily workflows. The growth of microservices architecture has only increased this dependency, as orchestrating five or ten separate services manually is no longer feasible.

A Chronology of Container Orchestration

The journey toward modern Docker Compose templates began in 2013 with the release of Docker as an open-source project. In 2014, Docker acquired Orchard, the company behind Fig (a tool designed to manage isolated development environments), and Fig was subsequently rebranded as Docker Compose. Over the following decade, the tool evolved from a standalone Python-based utility into Compose V2, which is written in Go and integrated into Docker Desktop and the Docker CLI for better performance.

In 2024, the focus of containerization has shifted from simple web hosting to complex data pipelines and local Artificial Intelligence (AI) deployment. The following seven templates represent the current state of the art in developer productivity, covering everything from traditional Content Management Systems (CMS) to cutting-edge AI automation.

1. WordPress Ecosystem: Streamlining CMS Development

WordPress remains a dominant force, powering approximately 43% of all websites globally. The template provided by the nezhar/wordpress-docker-compose repository offers a comprehensive environment that includes not just the WordPress core and a MySQL database, but also essential management tools like WP-CLI and phpMyAdmin.

For developers, this template eliminates the need for local Apache or Nginx installations. By containerizing the environment, developers can switch between different PHP versions or WordPress releases without affecting their host system. This is particularly critical for plugin and theme developers who must ensure compatibility across various environment configurations. The inclusion of WP-CLI allows for automated migrations and command-line management, mirroring professional production workflows.
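The sketch below shows roughly how the four pieces described above could be wired together. It is an illustration built on the official `wordpress`, `mysql`, and `phpmyadmin` images, not the repository's actual file; the service names, credentials, ports, and the `tools` profile are assumptions.

```yaml
# Illustrative sketch only; not the nezhar/wordpress-docker-compose file.
services:
  wp:
    image: wordpress:latest        # pin a PHP-specific tag here to test another PHP version
    ports:
      - "8080:80"
    environment:
      WORDPRESS_DB_HOST: db
      WORDPRESS_DB_NAME: wordpress
      WORDPRESS_DB_USER: wp_user   # placeholder credentials
      WORDPRESS_DB_PASSWORD: wp_pass
    volumes:
      - wp_data:/var/www/html
    depends_on:
      - db
  db:
    image: mysql:8.0
    environment:
      MYSQL_DATABASE: wordpress
      MYSQL_USER: wp_user
      MYSQL_PASSWORD: wp_pass
      MYSQL_ROOT_PASSWORD: root_pass
    volumes:
      - db_data:/var/lib/mysql
  wpcli:
    image: wordpress:cli
    user: "33:33"                  # match the www-data UID of the Debian-based wordpress image
    profiles: ["tools"]            # not started by a plain "up"; run on demand
    environment:
      WORDPRESS_DB_HOST: db
      WORDPRESS_DB_NAME: wordpress
      WORDPRESS_DB_USER: wp_user
      WORDPRESS_DB_PASSWORD: wp_pass
    volumes:
      - wp_data:/var/www/html      # share the WordPress files with the wp service
    depends_on:
      - db
  pma:
    image: phpmyadmin:latest
    ports:
      - "8081:80"
    environment:
      PMA_HOST: db                 # point phpMyAdmin at the MySQL service
    depends_on:
      - db

volumes:
  wp_data:
  db_data:
```

With this layout, a command such as `docker compose run --rm wpcli plugin list` would run WP-CLI against the same files and database as the site itself.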

2. Next.js and Modern Full-Stack Architectures

As the industry moves toward React-based frameworks, Next.js has emerged as a leader for server-side rendering and static site generation. The leerob/next-self-host template provides a blueprint for developers who wish to move away from managed hosting providers like Vercel and toward self-managed infrastructure.

This template is significant because it addresses production-level concerns. It integrates Next.js with a PostgreSQL database and uses Nginx as a reverse proxy. Furthermore, it provides configurations for Incremental Static Regeneration (ISR) and caching, which are often difficult to configure in a containerized environment. This setup serves as a bridge for developers transitioning from "hobbyist" deployments to robust, scalable enterprise architectures.
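One possible arrangement of the three components named above is sketched here. It is not the layout used by leerob/next-self-host; the `DATABASE_URL` variable, the credentials, and the `nginx.conf` file are hypothetical, and the sketch assumes a Dockerfile for the Next.js app sits next to the Compose file.

```yaml
# Illustrative sketch only; not the repository's actual configuration.
services:
  web:
    build: .                       # assumes a Dockerfile for the Next.js app in this directory
    environment:
      DATABASE_URL: postgres://app:app_pass@db:5432/app   # hypothetical variable name
    expose:
      - "3000"                     # Next.js default port, reachable only inside the Compose network
    depends_on:
      - db
  db:
    image: postgres:16
    environment:
      POSTGRES_USER: app
      POSTGRES_PASSWORD: app_pass  # placeholder credentials
      POSTGRES_DB: app
    volumes:
      - pg_data:/var/lib/postgresql/data
  proxy:
    image: nginx:alpine
    ports:
      - "80:80"                    # the only port published to the host
    volumes:
      # hypothetical config file that proxies requests to http://web:3000
      - ./nginx.conf:/etc/nginx/conf.d/default.conf:ro
    depends_on:
      - web

volumes:
  pg_data:
```

Publishing only the proxy's port keeps the application and database off the host network, which is the usual reason for placing a reverse proxy in front of a self-hosted app.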

3. Data Management with PostgreSQL and pgAdmin

Database management is a cornerstone of backend development. The postgresql-pgadmin template from Docker’s official "Awesome Compose" collection provides a streamlined way to deploy a relational database alongside a graphical user interface (GUI).

In a microservices context, having a dedicated, reproducible database container is vital for integration testing. This template allows developers to define schemas, seed data, and perform complex queries through pgAdmin without installing heavy database engines directly on their machines. This isolation ensures that database configurations remain identical across the entire development team, reducing "configuration drift."
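A minimal sketch of such a database-plus-GUI pair follows, using the official `postgres` image and the `dpage/pgadmin4` image. The credentials, host ports, and the `./initdb` seed directory are assumptions for illustration, not values from the Awesome Compose sample.

```yaml
# Illustrative sketch; credentials, ports, and paths are placeholders.
services:
  postgres:
    image: postgres:16
    environment:
      POSTGRES_USER: dev
      POSTGRES_PASSWORD: dev_pass
      POSTGRES_DB: appdb
    ports:
      - "5432:5432"
    volumes:
      - pg_data:/var/lib/postgresql/data
      # *.sql and *.sh files in this hypothetical directory run once,
      # when the data volume is first initialized (schema and seed data)
      - ./initdb:/docker-entrypoint-initdb.d:ro
  pgadmin:
    image: dpage/pgadmin4:latest
    environment:
      PGADMIN_DEFAULT_EMAIL: dev@example.com
      PGADMIN_DEFAULT_PASSWORD: admin_pass
    ports:
      - "5050:80"                  # pgAdmin listens on port 80 inside the container
    depends_on:
      - postgres

volumes:
  pg_data:
```

When registering the server inside pgAdmin, the host name is the service name `postgres` rather than `localhost`, because both containers share the Compose network.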

4. Django and the Python Backend Stack

For Python developers, the nickjj/docker-django-example repository represents one of the most complete boilerplate templates available. Django applications in production rarely run in isolation; they typically require a task queue, a cache, and a persistent database.

This template includes:

- PostgreSQL: For robust data storage.

- Redis: Serving as both a cache and a message broker.

- Celery: For handling asynchronous background tasks.

- Environment-based configuration: To manage secrets and settings securely.

By providing a pre-configured Celery and Redis setup, this template allows developers to focus on building features like email notifications or image processing without the overhead of manually wiring together these disparate services.
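The wiring between these four components can be sketched as follows. This is not the nickjj/docker-django-example file: the `config` project module, the gunicorn command, and the contents of `.env` are assumptions made for the example.

```yaml
# Illustrative sketch only; "config" is a hypothetical Django project module.
services:
  web:
    build: .                       # assumes a Dockerfile for the Django app
    command: gunicorn config.wsgi:application --bind 0.0.0.0:8000
    env_file: .env                 # secrets and settings stay out of the Compose file
    ports:
      - "8000:8000"
    depends_on:
      - postgres
      - redis
  worker:
    build: .                       # same image as web, different command
    command: celery -A config worker --loglevel=info
    env_file: .env
    depends_on:
      - postgres
      - redis
  postgres:
    image: postgres:16
    env_file: .env                 # expected to define POSTGRES_USER, POSTGRES_PASSWORD, POSTGRES_DB
    volumes:
      - pg_data:/var/lib/postgresql/data
  redis:
    image: redis:7                 # used as both the cache and the Celery broker

volumes:
  pg_data:
```

Running the web process and the Celery worker from the same build keeps application code and task definitions in sync, which is why both services point at the same build context.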

5. Event-Driven Systems with Apache Kafka

As applications move toward real-time data processing, Apache Kafka has become the industry standard for event streaming. However, Kafka has a reputation for being difficult to set up, historically because of its dependency on ZooKeeper (newer releases can run without it in KRaft mode) and the various management interfaces that surround it.

The conduktor/kafka-stack-docker-compose template simplifies this by offering various "flavors" of the Kafka stack. It includes Schema Registry for data consistency, Kafka Connect for integrating with other data sources, and the Conduktor Platform for visual monitoring. For developers working on financial systems or real-time analytics, this template provides a local "sandbox" that mimics the high-throughput environments found in large-scale enterprises.
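For orientation, a single-broker stack with ZooKeeper and Schema Registry might look roughly like the sketch below. It uses the Confluent Platform images and their documented environment variables, but the image tags, service names, and ports are assumptions; it is not one of the repository's actual flavor files, and it omits Kafka Connect and the Conduktor Platform.

```yaml
# Illustrative single-broker sketch; not a file from the conduktor repository.
services:
  zookeeper:
    image: confluentinc/cp-zookeeper:7.5.0
    environment:
      ZOOKEEPER_CLIENT_PORT: 2181
      ZOOKEEPER_TICK_TIME: 2000
  broker:
    image: confluentinc/cp-kafka:7.5.0
    depends_on:
      - zookeeper
    ports:
      - "9092:9092"
    environment:
      KAFKA_BROKER_ID: 1
      KAFKA_ZOOKEEPER_CONNECT: zookeeper:2181
      # One listener for other containers, one for clients on the host
      KAFKA_LISTENER_SECURITY_PROTOCOL_MAP: PLAINTEXT:PLAINTEXT,PLAINTEXT_HOST:PLAINTEXT
      KAFKA_ADVERTISED_LISTENERS: PLAINTEXT://broker:29092,PLAINTEXT_HOST://localhost:9092
      KAFKA_INTER_BROKER_LISTENER_NAME: PLAINTEXT
      KAFKA_OFFSETS_TOPIC_REPLICATION_FACTOR: 1   # required with a single broker
  schema-registry:
    image: confluentinc/cp-schema-registry:7.5.0
    depends_on:
      - broker
    ports:
      - "8081:8081"
    environment:
      SCHEMA_REGISTRY_HOST_NAME: schema-registry
      SCHEMA_REGISTRY_KAFKASTORE_BOOTSTRAP_SERVERS: broker:29092
      SCHEMA_REGISTRY_LISTENERS: http://0.0.0.0:8081
```

The two advertised listeners are the part that most often trips people up: containers on the Compose network connect to `broker:29092`, while applications running on the host connect to `localhost:9092`.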

6. Self-Hosted AI and Automation with n8n

The rise of low-code automation and generative AI has led to a demand for self-hosted integration platforms. The n8n-io/self-hosted-ai-starter-kit is a specialized Docker Compose stack that combines the n8n automation tool with AI-specific components like Ollama (for local LLMs) and Qdrant (a vector database).

This template is a response to the growing concern over data privacy in AI. By running these tools locally, companies can build AI agents and automated workflows that process sensitive data without sending it to third-party cloud providers. It represents a significant shift in the DevOps landscape, where "AI-Ops" is becoming a standard requirement for modern software stacks.
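A stripped-down sketch of the three components named above is shown here. It is not the starter kit's own file; the image tags and volume names are assumptions, and the real kit may include further services and configuration.

```yaml
# Illustrative sketch only; not the n8n-io/self-hosted-ai-starter-kit file.
services:
  n8n:
    image: n8nio/n8n:latest
    ports:
      - "5678:5678"                # n8n editor UI, the only port published to the host
    volumes:
      - n8n_data:/home/node/.n8n   # workflows and credentials
    depends_on:
      - ollama
      - qdrant
  ollama:
    image: ollama/ollama:latest
    volumes:
      - ollama_data:/root/.ollama  # downloaded model weights
  qdrant:
    image: qdrant/qdrant:latest
    volumes:
      - qdrant_data:/qdrant/storage   # vector collections

volumes:
  n8n_data:
  ollama_data:
  qdrant_data:
```

Because Ollama and Qdrant publish no ports in this sketch, they are reachable only from other containers on the Compose network (for example at `http://ollama:11434` and `http://qdrant:6333` from n8n), which fits the privacy motivation described above.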

7. Local LLM Management: Ollama and Open WebUI

For developers focused purely on Large Language Models (LLMs), the ollama-litellm-openwebui template provides a user-friendly interface for interacting with local models like Llama 3 or Mistral. It uses LiteLLM as a proxy to provide an OpenAI-compatible API, allowing developers to swap out local models for cloud-based ones with minimal code changes.

This setup is particularly useful for testing how applications react to different model outputs. With the Open WebUI component, developers get a ChatGPT-like interface running entirely on their own hardware. This democratization of AI tools is a direct result of containerization making complex software installations accessible to the average developer.
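The relationship between the three services can be sketched as below. This is an approximation based on the publicly documented images for each project, not the template's actual file; the image tags, ports, and in particular the `litellm-config.yaml` file (which would map model names to the Ollama endpoint) are assumptions.

```yaml
# Illustrative sketch only; file names, tags, and ports are assumptions.
services:
  ollama:
    image: ollama/ollama:latest
    volumes:
      - ollama_data:/root/.ollama  # downloaded model weights
  litellm:
    image: ghcr.io/berriai/litellm:main-latest
    command: ["--config", "/app/config.yaml", "--port", "4000"]
    volumes:
      # hypothetical config that routes model names to http://ollama:11434
      - ./litellm-config.yaml:/app/config.yaml:ro
    ports:
      - "4000:4000"                # OpenAI-compatible endpoint for local applications
    depends_on:
      - ollama
  open-webui:
    image: ghcr.io/open-webui/open-webui:main
    environment:
      OLLAMA_BASE_URL: http://ollama:11434
    ports:
      - "3000:8080"                # Open WebUI listens on 8080 inside the container
    volumes:
      - webui_data:/app/backend/data
    depends_on:
      - ollama

volumes:
  ollama_data:
  webui_data:
```

An application that already speaks the OpenAI API would then only need its base URL pointed at the proxy on port 4000 to use a local model, which is the swap the paragraph above describes.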

Industry Implications and Expert Analysis

The proliferation of these templates indicates a broader trend toward "Infrastructure as Code" (IaC) at the local level. Industry analysts suggest that the use of standardized Docker Compose templates can reduce "onboarding time" for new developers by up to 70%. Instead of spending days configuring a local machine, a new hire can clone a repository and be productive within minutes.

Furthermore, the shift toward local AI stacks (Templates 6 and 7) suggests that the next frontier for Docker is the optimization of GPU resources within containers. As more developers run LLMs locally, the ability for Docker Compose to manage hardware acceleration (via NVIDIA Container Toolkit) will become a primary focus for the community.
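Compose already offers a way to request GPU access through device reservations. The fragment below is a minimal sketch that assumes an NVIDIA GPU and a host with the NVIDIA Container Toolkit installed; the choice of the Ollama image and a single GPU is illustrative.

```yaml
# Minimal sketch: reserve one NVIDIA GPU for a container.
# Assumes the NVIDIA Container Toolkit is installed on the host.
services:
  ollama:
    image: ollama/ollama:latest
    volumes:
      - ollama_data:/root/.ollama
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              count: 1             # use "all" to expose every available GPU
              capabilities: [gpu]

volumes:
  ollama_data:
```

On a host without a supported GPU or the toolkit, a service declared this way is expected to fail at startup, so teams with mixed hardware may prefer to keep the reservation in a separate override file.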

Supporting Data: The Impact of Containerization

Recent data from Gartner predicts that by 2027, more than 90% of global organizations will be running containerized applications in production, up from less than 50% in 2020. This growth is mirrored in the development phase, where Docker Compose serves as the primary gateway to Kubernetes.

| Metric | Impact of Docker Compose Usage |
|---|---|
| Onboarding Time | Reduced from 2-3 days to <1 hour |
| Environment Parity | 95% reduction in "works on my machine" bugs |
| Resource Efficiency | Up to 40% less overhead compared to Virtual Machines |
| Deployment Frequency | 3x increase in teams using containerized CI/CD |

Conclusion: Building on a Strong Foundation

The seven templates discussed—ranging from WordPress and Next.js to Kafka and AI-driven automation—provide more than just a shortcut; they offer a standardized methodology for modern software development. By leveraging these community-vetted configurations, developers can avoid the pitfalls of manual setup and focus on the core logic of their applications.

As the software landscape continues to evolve toward decentralized AI and complex event-driven architectures, the role of Docker Compose as an orchestrator for local development will only solidify. These templates serve as a critical foundation for any developer looking to build robust, scalable, and portable software in the modern era. Using these proven environments allows for a "fail-fast" approach to experimentation, where complex systems can be spun up, tested, and torn down with unparalleled ease.