Toy maker Mattel recently overhauled the design process for its iconic Hot Wheels brand by turning to an unexpected collaborator: artificial intelligence. Using DALL-E 2, a generative AI system developed by OpenAI, Mattel’s design team has begun turning simple text descriptions into detailed visual concepts, a significant shift in how consumer products move from ideation to production. Mattel’s work is the showcase example in a broader announcement made at Microsoft Ignite, where Microsoft confirmed that DALL-E 2 is joining the Azure OpenAI Service. The move gives select enterprise customers access to high-performance cloud infrastructure that generates custom imagery from natural language prompts, backed by the security and compliance frameworks of the Microsoft Azure ecosystem.

The Convergence of Generative AI and Industrial Design

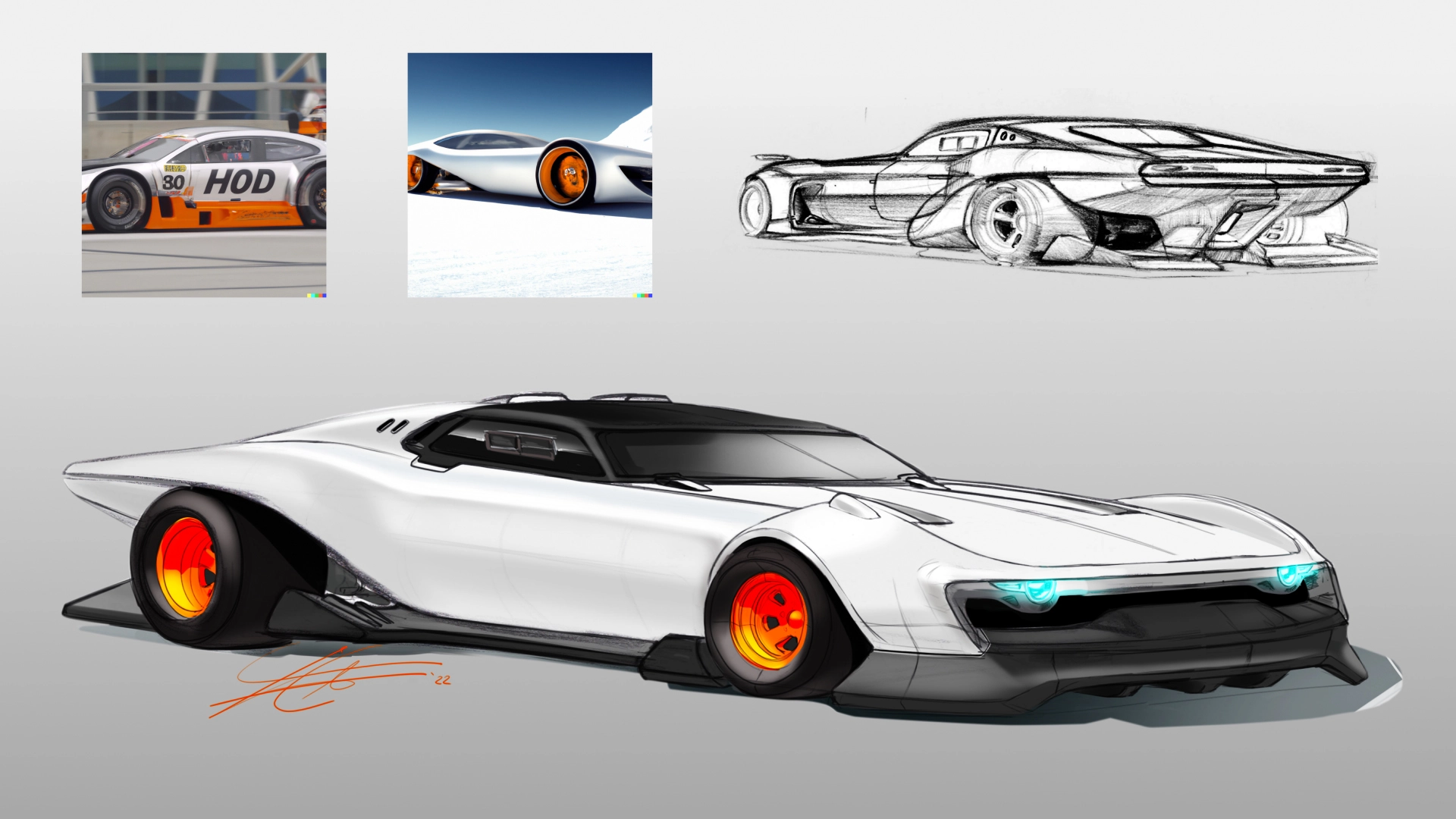

At Mattel’s Future Lab in El Segundo, California, the integration of DALL-E 2 has allowed designers to bypass traditional sketching bottlenecks. A designer can input a prompt as simple as "a scale model of a classic car" and receive a high-fidelity image of a vintage vehicle, complete with period-accurate details like whitewall tires. The iterative nature of the tool allows for rapid modification; by erasing a portion of the generated image and typing "make it a convertible" or "render it in hot pink," the AI updates the visual in seconds.

Carrie Buse, Director of Product Design at Mattel Future Lab, emphasized that the technology acts as a catalyst for human creativity rather than a replacement for it. According to Buse, the primary value lies in the sheer volume of ideas the system can produce. While quality remains the ultimate goal, the ability to generate dozens of iterations in the time it previously took to create one helps designers identify unique aesthetic directions they might not have otherwise considered. This "quantity leads to quality" approach is becoming a hallmark of the new generative design era.

Microsoft Ignite and the Strategic Rollout of Azure OpenAI Service

The announcement at Microsoft Ignite, an annual conference for developers and IT professionals, signals a major step in Microsoft’s strategy to "productize" large-scale AI models. DALL-E 2 is now available via invitation to Azure OpenAI Service customers, joining other powerful models such as GPT-3 for natural language processing and Codex for automated code generation.

The availability of DALL-E 2 through Azure is distinct from the public version of the tool. Enterprise customers require specific guarantees regarding data privacy, uptime, and ethical guardrails. By hosting DALL-E 2 on Azure, Microsoft provides the necessary certifications and "Responsible AI" filters that allow large corporations to use generative technology without violating internal compliance or external regulatory standards. This integration is also extending to Microsoft’s consumer-facing applications, including the newly launched Microsoft Designer app and the Image Creator feature within the Bing search engine.

A Chronology of the Microsoft-OpenAI Partnership

The integration of DALL-E 2 is the latest milestone in a multi-year strategic partnership between Microsoft and OpenAI. To understand the current landscape, it is essential to look at the timeline of this collaboration:

- July 2019: Microsoft announced a $1 billion investment in OpenAI, becoming the startup’s exclusive cloud provider. The goal was to build a computational platform of unprecedented scale.

- September 2020: Microsoft announced it had reached an agreement to license GPT-3, one of the most advanced language models available at the time, allowing for deeper integration into Microsoft products.

- May 2021: During the Build conference, Microsoft showcased the first commercial integration of GPT-3 into Microsoft Power Apps, enabling "low-code" development through natural language.

- November 2021: The Azure OpenAI Service was first introduced in a limited, invitation-only preview, offering enterprise-grade access to OpenAI’s models.

- October 2022: At Microsoft Ignite, the company officially added DALL-E 2 to the Azure OpenAI suite and announced the integration of generative AI into the Microsoft 365 ecosystem.

This progression illustrates a shift from research-oriented experimentation to a "nonlinear breakthrough" phase where AI models are mature enough for mission-critical business applications.

Technical Infrastructure: The Role of Azure Supercomputing

The performance of DALL-E 2 is inextricably linked to the hardware it runs on. Microsoft built a specialized AI supercomputer in the Azure cloud exclusively for training OpenAI’s models. This infrastructure utilizes thousands of NVIDIA GPUs linked by high-bandwidth networking, providing the massive compute power required to process the trillions of data points necessary for text-to-image synthesis.

Eric Boyd, Microsoft Corporate Vice President for AI Platform, noted that the industry has crossed a "threshold of quality." Previously, AI models were proof-of-concept curiosities; now, they have the fidelity required for professional design, marketing, and software engineering. The Azure platform hosted the training of these models and now also serves as the delivery mechanism that lets these tools generate suggestions for text, code, or images in real time.

Automating the Tedious: Power Automate and Natural Language

Beyond visual art, Microsoft is infusing AI into the "monotony" of office work. Charles Lamanna, Corporate Vice President of Business Applications and Platform, detailed how natural language models are being used to automate complex workflows. Through Power Automate, users can now describe a task in plain English, such as "Whenever I get an email from my boss, send a text to my phone and create a task in Outlook," and the AI will automatically assemble the flow of triggers and actions needed to carry it out.
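
The example sentence above boils down to one trigger and two actions. The following is a minimal Python sketch of that trigger-and-action shape, not Power Automate’s actual format or API: the `Flow` class, the handler functions, and the `boss@example.com` address are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Hypothetical address standing in for "my boss".
BOSS_ADDRESS = "boss@example.com"


@dataclass
class Flow:
    """A trigger predicate plus the ordered actions to run when it matches."""
    name: str
    trigger: Callable[[Dict], bool]
    actions: List[Callable[[Dict], str]] = field(default_factory=list)

    def handle(self, event: Dict) -> List[str]:
        # Run every action only if the trigger matches the incoming event.
        if not self.trigger(event):
            return []
        return [action(event) for action in self.actions]


def email_from_boss(event: Dict) -> bool:
    """Trigger: 'Whenever I get an email from my boss'."""
    return event.get("type") == "email" and event.get("sender") == BOSS_ADDRESS


def send_text(event: Dict) -> str:
    """Action 1: 'send a text to my phone' (returns the message, sends nothing)."""
    return f"SMS: new email from boss: {event['subject']}"


def create_task(event: Dict) -> str:
    """Action 2: 'create a task in Outlook' (returns a description only)."""
    return f"TASK: follow up on '{event['subject']}'"


boss_flow = Flow(
    name="boss-email-alert",
    trigger=email_from_boss,
    actions=[send_text, create_task],
)
```

Calling `boss_flow.handle(...)` with an email event from the boss returns one result per action, while any other event returns an empty list. In this framing, the language model’s job would be to translate the plain-English request into the trigger and action list, which is the part a user previously had to configure by hand.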

This democratization of software development means that employees without formal coding training can create bespoke tools to manage their specific workloads. Lamanna highlighted the potential for AI to act as a "copilot" for professionals. For example, a lawyer could use AI to monitor a SharePoint site for new contracts, extract key metadata (parties involved, industry sector, financial terms), and automatically email a summary to the relevant partners. This reduces hours of skimming and manual data entry to a few seconds of automated processing.

Content AI and the Transformation of Microsoft 365

The scale of digital content creation has reached a tipping point. Microsoft reports that its customers add approximately 1.6 billion pieces of content to Microsoft 365 every day, ranging from emails and Word documents to Teams meeting transcripts. To manage this deluge, Microsoft introduced "Microsoft Syntex," a Content AI offering that uses Azure Cognitive Services to read, tag, and index both digital and paper documents.

Jeff Teper, Microsoft President of Collaborative Apps and Platform, pointed out that Syntex allows organizations to perform "structured activities" like contract approvals and invoice management at a scale humans cannot match. For instance, TaylorMade Golf Company utilized Syntex to organize thousands of intellectual property and patent filings. Previously, attorneys spent hours manually filing documents; with AI, the system automatically classifies and filters documents, making them searchable via metadata rather than a traditional, cumbersome folder system.

Personalized Media and the Future of Consumer Engagement

The implications of DALL-E 2 extend deeply into the media and entertainment sectors. RTL Deutschland, Germany’s largest private cross-media company, is currently testing DALL-E 2 to solve the "recommendation gap." Marc Egger, Senior VP of Data Products and Technology at RTL, explained that while algorithms are good at recommending content, users often decide what to watch based on visual cues.

RTL is exploring the use of DALL-E 2 to generate personalized artwork for its streaming service, RTL+. If a user is a fan of sports, the thumbnail for a romantic comedy might feature the lead character in a stadium; if the user prefers romance, the same movie might be represented by a scene of the couple in a picturesque setting. Furthermore, RTL is investigating the generation of unique imagery for podcast episodes and audiobook chapters, providing a visual accompaniment to traditionally audio-only formats. This level of personalization would be impossible with a human workforce alone, as no design team could produce millions of unique images for millions of individual users.
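
One way to picture the personalization step is as a lookup from a viewer’s interest to a scene description, which is then folded into the text prompt sent to the image model. The Python sketch below is illustrative only and does not describe RTL’s system: the function name, the interest labels, and the scene wording are assumptions, with the two scenes paraphrased from the examples above.

```python
# Hypothetical mapping from a viewer interest to the scene used in the prompt.
SCENE_BY_INTEREST = {
    "sports": "the lead character cheering in a packed stadium",
    "romance": "the couple together in a picturesque setting",
}

# Fallback scene for viewers whose interest has no specific entry.
DEFAULT_SCENE = "the main cast in a key scene"


def build_artwork_prompt(title: str, genre: str, interest: str) -> str:
    """Return a text prompt for a thumbnail tailored to one viewer interest."""
    scene = SCENE_BY_INTEREST.get(interest.strip().lower(), DEFAULT_SCENE)
    return f"Streaming thumbnail for the {genre} '{title}': {scene}"
```

For a hypothetical title, `build_artwork_prompt("Second Half", "romantic comedy", "sports")` yields a stadium-themed prompt, while the same call with `"romance"` yields the couple-focused one. The same film therefore gets a different prompt, and so a different image, per audience segment.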

Ethics and Responsibility: Building AI Guardrails

As generative AI becomes more prevalent, the potential for misuse—such as the creation of "deepfakes" or inappropriate content—remains a significant concern. Sarah Bird, Microsoft’s Principal Group Project Manager for Azure AI, addressed the company’s commitment to "Responsible AI."

To mitigate risks, Microsoft and OpenAI have implemented several layers of protection:

- Data Scrubbing: Explicitly violent or sexual content was removed from the initial training datasets.

- Prompt Filtering: Azure AI employs filters that automatically reject user prompts that violate content policies.

- Output Monitoring: Secondary models scan the generated images to ensure they do not contain gore or adult content before they are shown to the user.

- Public Figure Protection: Specific techniques have been integrated to prevent the system from generating recognizable images of celebrities or public figures.
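
The prompt-filtering and output-monitoring layers listed above can be pictured as two checks wrapped around the image generator. The Python sketch below shows only that control flow and is not Microsoft’s or OpenAI’s implementation: the keyword blocklist is a crude stand-in for a real content-policy classifier, and `generate` and `output_is_safe` are hypothetical callables supplied by the caller.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# Placeholder policy list; a production filter would use trained classifiers.
BLOCKED_TERMS = {"gore", "nude"}


@dataclass
class Result:
    image: Optional[bytes]       # generated image, or None if rejected
    rejected_at: Optional[str]   # "prompt", "output", or None if accepted


def prompt_allowed(prompt: str) -> bool:
    """Layer 1: reject prompts that contain any blocked term as a whole word."""
    words = set(prompt.lower().split())
    return words.isdisjoint(BLOCKED_TERMS)


def guarded_generate(
    prompt: str,
    generate: Callable[[str], bytes],
    output_is_safe: Callable[[bytes], bool],
) -> Result:
    """Run the prompt filter, then the generator, then the output check."""
    if not prompt_allowed(prompt):
        return Result(image=None, rejected_at="prompt")
    image = generate(prompt)
    # Layer 2: a secondary model inspects the image before the user sees it.
    if not output_is_safe(image):
        return Result(image=None, rejected_at="output")
    return Result(image=image, rejected_at=None)
```

Because a rejected prompt returns before `generate` is called, no compute is spent on requests that fail the first layer, and the output check still catches images that slip past an imperfect prompt filter.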

Bird emphasized that these systems are also designed to combat the inherent biases of the internet. Because AI models are trained on existing web data, they can inadvertently replicate social biases. Microsoft is focusing on "interface design" to encourage users to provide more descriptive prompts, helping the AI generate diverse and representative imagery rather than falling back on "average" or biased internet representations.

Analysis: The Shift from Research to Utility

The rollout of DALL-E 2 across the Azure ecosystem represents a fundamental shift in the technology sector. For the past decade, AI has largely been a field of academic research and specialized niche applications. We are now entering the era of "AI Productization," where the focus has moved from proving what AI can do to mapping its capabilities onto actual business processes.

The economic impact of this shift is likely to be profound. By automating the "tedious" aspects of creativity and administration, companies like Mattel, TaylorMade, and RTL stand to reclaim many hours of human productivity. As Microsoft continues to integrate these models into the tools used by over a billion people daily, the line between human effort and machine assistance will continue to blur, ushering in a new standard for how work is conceived and executed in the digital age.