Digital information is undergoing a fundamental transformation that is turning data compression from a niche multimedia tool into a foundational layer of global digital infrastructure. For decades, the primary goal of compression was to make audio and video files small enough to stream or store without perceptible loss of quality for human consumers. Today, the focus has widened to "all-data" compression, which covers diverse and complex datasets including genomic sequences, point clouds, haptics, 3D scenes, neural network weights, and machine-specific features. As the world generates an unprecedented volume of bits across sectors ranging from autonomous transportation to personalized medicine, compression has become the invisible operating system of the digital age.

The scale of this challenge is best illustrated by the sheer volume of data being produced. In 2020, the global datasphere—comprising all data created, captured, copied, and consumed—was estimated at approximately 59 zettabytes. Projections from industry analysts suggest this figure will swell to 175 zettabytes by 2025. To put this in perspective, a single zettabyte is equivalent to a trillion gigabytes. While computing power and storage capacity have increased exponentially since the invention of the transistor in 1947, the rate of data generation is currently outstripping the physical capacity to move, store, and process it efficiently. This bottleneck has made compression a strategic necessity rather than a technical luxury.
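The unit conversion and the growth implied by these two estimates can be checked with a few lines of arithmetic. The sketch below assumes decimal (SI) units; the compound annual growth rate is derived here from the figures quoted above and does not come from the analysts' reports.

```python
# Decimal (SI) units are assumed: 1 ZB = 10**21 bytes, 1 GB = 10**9 bytes.
ZETTABYTE = 10**21
GIGABYTE = 10**9

gb_per_zb = ZETTABYTE // GIGABYTE  # 10**12, i.e. one trillion gigabytes


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate implied by two estimates."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text: 59 ZB (2020) and 175 ZB (2025).
growth = implied_annual_growth(59, 175, 2025 - 2020)

print(f"1 ZB = {gb_per_zb:,} GB")
print(f"Implied growth: {growth:.1%} per year")
```

Taken at face value, the two figures imply that the datasphere grows by roughly a quarter every year.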

The Institutional Backbone: SC 29, JPEG, and MPEG

At the center of this technological evolution is the ISO/IEC JTC 1/SC 29 subcommittee. While not a household name, SC 29 is the governing body that coordinates the Joint Photographic Experts Group (JPEG) and the Moving Picture Experts Group (MPEG). For over three decades, these groups have defined the standards that allow digital media to function across different devices and platforms. Historically, their focus was "media for humans"—content intended for eyes and ears. However, their current roadmap reflects a broadening scope: "data for humans and machines."

The work of SC 29 now spans the entire digital value chain, from content creation and processing to distribution and consumption. By standardizing how data is represented and reduced, these groups ensure interoperability between smartphones, medical imaging devices, autonomous vehicle sensors, and high-performance data centers. The transition is marked by a move away from handcrafted mathematical transforms toward AI-native architectures and biological storage solutions.

The Evolution of JPEG: From Pixels to Latent Tensors and DNA

The ubiquitous .jpg format has served as the web’s visual standard for over 30 years, but the JPEG committee has significantly diversified its portfolio to meet the demands of the 2020s.

JPEG AI and the Shift to Latent Spaces

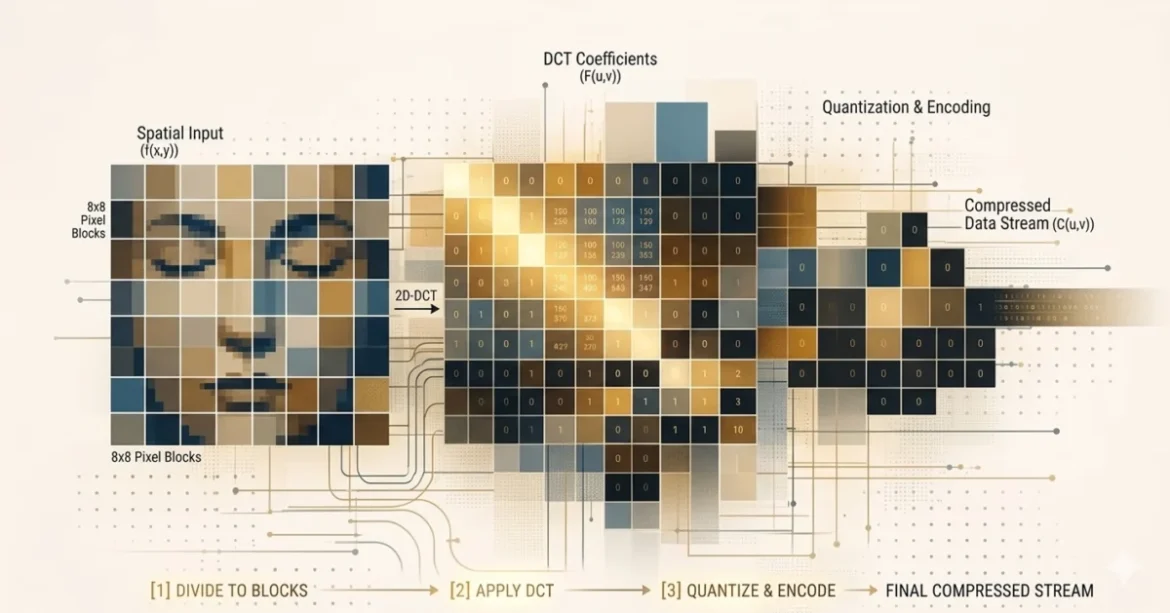

Traditional image compression works by discarding visual information that the human eye cannot easily see. JPEG AI, the first learning-based image coding standard, takes a different approach. Instead of operating on pixels, it transforms images into "latent tensors"—mathematical representations in an abstract space. The decoder can reconstruct the image for human viewing or, crucially, allow machine learning algorithms to perform computer vision tasks directly on the compressed data without full decoding. This "dual-use" capability is essential for smart city applications where a single camera stream must be both archived for security personnel and analyzed by traffic-flow algorithms.

JPEG Trust and Authenticity

As generative AI floods the internet with synthetic media, JPEG Trust has emerged as a vital tool for digital sovereignty. Built upon the Coalition for Content Provenance and Authenticity (C2PA) framework, JPEG Trust provides a standardized method for embedding metadata that tracks the origin, ownership, and modification history of an image. This record works much like a digital signature, helping users and platforms distinguish between a captured photograph and a deepfake, a kind of transparency increasingly required by regulations such as the European Union’s AI Act.

JPEG DNA: Biological Storage Solutions

Perhaps the most forward-looking initiative is JPEG DNA. With conventional magnetic and optical storage media lasting only a few decades before degradation or obsolescence, researchers are turning to nature’s original data store. DNA offers an information density and longevity that far exceed those of any conventional storage technology, and it can preserve data for centuries. JPEG DNA aims to standardize how digital images are encoded into biochemical sequences, addressing the high error rates inherent in synthesis and sequencing to create a truly "future-proof" archive.
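To make the idea of encoding bits as biochemical sequences concrete, the sketch below maps every two bits to one nucleotide. This is the textbook two-bits-per-base mapping, not the JPEG DNA codec: it is an assumption made for illustration, the function names are hypothetical, and it ignores both the biochemical constraints and the error correction that a practical scheme has to handle.

```python
BASES = "ACGT"  # assumed mapping: 00 -> A, 01 -> C, 10 -> G, 11 -> T


def bytes_to_dna(data: bytes) -> str:
    """Encode each byte as four nucleotides, most significant bits first."""
    return "".join(
        BASES[(byte >> shift) & 0b11]
        for byte in data
        for shift in (6, 4, 2, 0)
    )


def dna_to_bytes(strand: str) -> bytes:
    """Invert bytes_to_dna; the strand length must be a multiple of four."""
    if len(strand) % 4:
        raise ValueError("strand length must be a multiple of 4")
    out = bytearray()
    for i in range(0, len(strand), 4):
        byte = 0
        for base in strand[i:i + 4]:
            byte = (byte << 2) | BASES.index(base)
        out.append(byte)
    return bytes(out)


strand = bytes_to_dna(b"JPEG")
print(strand)                 # 16 nucleotides for 4 bytes
print(dna_to_bytes(strand))   # round-trips to the original bytes
```

Even this short strand contains runs of a repeated base, which hints at why a naive mapping is not enough when synthesis and sequencing are error-prone.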

MPEG and the Future of Video: H.267 and Energy Efficiency

The Moving Picture Experts Group (MPEG) has been the architect of the modern media world through standards like MP3, AVC (H.264), and HEVC (H.265). Its latest standard, Versatile Video Coding (VVC or H.266), was finalized in 2020, but the group is already looking toward the next decade.

The Path to H.267

The current Enhanced Compression Model (ECM) project is laying the groundwork for what will likely become the H.267 codec. Targeted for finalization around 2028, this next-generation standard aims for a 40% reduction in bitrate compared to VVC. This is critical for the deployment of 8K resolution, high-frame-rate content (up to 240 fps), and immersive VR/AR experiences. However, industry cycles are accelerating; while it took a decade to move from AVC to HEVC, the gap between VVC and H.267 is expected to be shorter as competitors like AOMedia (AV1/AV2) and regional standards like China’s AVS3 push the boundaries of efficiency.
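A rough calculation shows why extra efficiency matters at these resolutions and frame rates. The sketch assumes 10-bit 4:2:0 sampling (15 bits per pixel on average) and a purely hypothetical 100 Mbit/s VVC stream; only the 8K resolution, the 240 fps frame rate, and the 40% target come from the text.

```python
WIDTH, HEIGHT = 7680, 4320   # 8K UHD
FPS = 240                    # upper frame rate mentioned in the text
BITS_PER_PIXEL = 15          # assumption: 10-bit samples, 4:2:0 chroma (10 * 1.5)

raw_bps = WIDTH * HEIGHT * FPS * BITS_PER_PIXEL
raw_gbps = raw_bps / 1e9     # uncompressed rate in Gbit/s


def target_bitrate(vvc_mbps: float, reduction: float = 0.40) -> float:
    """Bitrate at equal quality if the stated reduction versus VVC is met."""
    return vvc_mbps * (1 - reduction)


hypothetical_vvc_mbps = 100.0   # illustrative value, not a measurement
next_gen_mbps = target_bitrate(hypothetical_vvc_mbps)

print(f"Uncompressed 8K at 240 fps: {raw_gbps:.1f} Gbit/s")
print(f"{hypothetical_vvc_mbps:.0f} Mbit/s with VVC -> {next_gen_mbps:.0f} Mbit/s at the 40% target")
```

Under these assumptions the uncompressed signal runs to well over 100 Gbit/s, so every percentage point of coding gain translates into substantial bandwidth.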

Green Metadata and Sustainability

As codecs become more complex, the energy required to encode and decode video has become a significant environmental concern. ISO/IEC 23001-11, also known as Green Metadata, addresses this by allowing content to carry information that helps devices reduce power consumption. For example, a display could adapt its backlight level to the characteristics of each scene as signaled in the metadata, optimizing energy usage without sacrificing viewer experience. In an era where "joules per bit" is as important as "bits per pixel," energy efficiency has become a formal selection criterion for new standards.
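The backlight example can be sketched as follows. The sketch assumes the metadata carries the peak 8-bit luma value of a frame; the field name `peak_luma` and both functions are hypothetical and are not taken from ISO/IEC 23001-11. The idea is that a dimmer backlight combined with proportionally brighter pixel values leaves the displayed luminance unchanged under a simplified linear display model.

```python
from typing import Tuple


def backlight_plan(peak_luma: int, max_code: int = 255) -> Tuple[float, float]:
    """Return (backlight_level, pixel_gain) for one frame.

    peak_luma is a hypothetical metadata field: the brightest luma code
    value in the frame. A linear display is assumed for simplicity.
    """
    if not 0 < peak_luma <= max_code:
        raise ValueError("peak_luma must be in 1..max_code")
    backlight = peak_luma / max_code   # fraction of full backlight power
    gain = max_code / peak_luma        # stretch pixels to use the full range
    return backlight, gain


def displayed(pixel: int, backlight: float, gain: float, max_code: int = 255) -> float:
    """Relative luminance of one pixel under the linear model."""
    return backlight * min(pixel * gain, max_code) / max_code


backlight, gain = backlight_plan(peak_luma=128)
print(f"Backlight at {backlight:.0%} of full power, pixel gain {gain:.2f}")
print(displayed(64, backlight, gain), displayed(64, 1.0, 1.0))
```

For a dark frame whose brightest pixel is 128, this toy model runs the backlight at about half power while each pixel appears as bright as before.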

Video and Feature Coding for Machines (VCM and FCM)

A significant portion of modern video traffic is never seen by a human. Surveillance cameras, industrial robots, and autonomous vehicles generate vast streams of data intended for machine consumption. Traditional codecs are inefficient for these use cases because they prioritize visual aesthetics over semantic information.

Collaborative Intelligence

MPEG-AI is developing Video Coding for Machines (VCM) and Feature Coding for Machines (FCM) to address this. VCM optimizes for tasks like object detection and segmentation, often dropping frames or reducing resolution in ways that would be unwatchable for humans but are perfect for AI. FCM goes a step further by using "collaborative intelligence." In this model, an edge device (like a drone) performs the first few layers of a neural network calculation and then transmits the intermediate "features" to a powerful cloud server. This can result in a 97% reduction in bandwidth while preserving privacy, as the transmitted data contains semantic meaning but no recognizable human faces or identities.
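A minimal sketch of this split, in pure Python with a toy two-stage model. Everything here is an assumption made for illustration: the "network" is a pair of hand-written functions, the 8-bit uniform quantizer stands in for a real feature codec, and none of the names come from the MPEG drafts. The savings it shows are specific to this toy and say nothing about the 97% figure.

```python
import struct
from typing import List


def edge_head(pixels: List[float]) -> List[float]:
    """Toy 'first layers' on the device: average-pool groups of 4, then ReLU."""
    pooled = [sum(pixels[i:i + 4]) / 4 for i in range(0, len(pixels), 4)]
    return [max(0.0, v) for v in pooled]


def encode_features(features: List[float]) -> bytes:
    """Uniformly quantize features to 8 bits; prepend min and max as float32."""
    lo, hi = min(features), max(features)
    scale = (hi - lo) or 1.0
    codes = bytes(round(255 * (f - lo) / scale) for f in features)
    return struct.pack("<ff", lo, hi) + codes


def decode_features(payload: bytes) -> List[float]:
    """Recover approximate feature values from the transmitted payload."""
    lo, hi = struct.unpack("<ff", payload[:8])
    scale = (hi - lo) or 1.0
    return [lo + scale * c / 255 for c in payload[8:]]


def cloud_tail(features: List[float]) -> str:
    """Toy 'remaining layers' in the cloud: threshold on the mean feature."""
    return "object" if sum(features) / len(features) > 0.5 else "background"


frame = [0.9, 0.8, 1.0, 0.7] * 24 + [0.1, 0.2, 0.0, 0.1] * 8   # 128 input values
payload = encode_features(edge_head(frame))   # what the edge device transmits
label = cloud_tail(decode_features(payload))  # what the server concludes
raw_bytes = len(frame) * 4                    # the same frame as 32-bit floats

print(f"{len(payload)} bytes sent instead of {raw_bytes}; label = {label}")
```

Only the quantized features cross the network; the server never receives the original samples, which is the property the privacy argument rests on.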

Immersive Content: Gaussian Splatting and 3D Scenes

The rise of the metaverse and spatial computing has necessitated new ways to compress 3D environments. Moving beyond traditional meshes, MPEG is exploring Gaussian Splat Coding (GSC). Gaussian Splatting represents a scene as millions of "fuzzy" 3D ellipsoids, allowing for photorealistic, real-time rendering on consumer hardware. Because a scene is a massive collection of these "splats," each a 3D point with attributes like opacity and color, standardizing their compression is essential for making immersive web experiences and interactive games viable over standard internet connections.
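A back-of-the-envelope size estimate shows why compression is needed. The attribute layout below follows the one commonly used in Gaussian Splatting research code (position, scale, rotation quaternion, opacity, and spherical-harmonic color coefficients, all stored as 32-bit floats). It is an assumption, not the format under study in MPEG, and the one-million-splat scene is hypothetical.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SplatLayout:
    """Number of 32-bit floats per attribute (assumed research-style layout)."""
    position: int = 3    # x, y, z centre of the ellipsoid
    scale: int = 3       # extent along each principal axis
    rotation: int = 4    # orientation as a quaternion
    opacity: int = 1
    color_sh: int = 48   # degree-3 spherical harmonics: 16 coefficients x RGB

    def floats_per_splat(self) -> int:
        return sum(getattr(self, f.name) for f in fields(self))

    def bytes_per_splat(self) -> int:
        return 4 * self.floats_per_splat()


layout = SplatLayout()
scene_splats = 1_000_000   # hypothetical scene size
scene_megabytes = scene_splats * layout.bytes_per_splat() / 1e6

print(f"{layout.bytes_per_splat()} bytes per splat, "
      f"{scene_megabytes:.0f} MB for {scene_splats:,} splats")
```

Under these assumptions a single uncompressed scene already runs to hundreds of megabytes, far more than a typical web page can reasonably ask a visitor to download.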

Furthermore, the G-PCC (Geometry-based Point Cloud Compression) family of standards is expanding to support spinning LiDAR sensors used in robotics. These codecs allow for low-latency processing of the dense, dynamic clouds of points that autonomous systems use to "see" their surroundings in three dimensions.

Audio Personalization and Accessibility

While video often dominates the conversation, audio compression is also pivoting toward object-based models. MPEG-H Audio allows for unprecedented personalization. Instead of a fixed stereo or surround mix, broadcasters can send audio "objects"—such as the commentator’s voice, the stadium crowd, and the engine noise of a race car—as separate streams.

A key innovation in this space is MPEG-H Dialog+, which allows users to selectively enhance speech clarity. This is a transformative feature for individuals with hearing impairments or those watching content in noisy environments, as it allows the dialogue to be boosted without distorting the background music or sound effects.
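The object-based idea can be illustrated with a small mixer in which the receiver, not the broadcaster, chooses the gain of each object. This is a sketch only: the object names, the sample values, and the `render` function are hypothetical, and real MPEG-H rendering involves far more than a weighted sum.

```python
from typing import Dict, List


def db_to_linear(gain_db: float) -> float:
    """Convert a gain in decibels to a linear amplitude factor."""
    return 10 ** (gain_db / 20)


def render(objects: Dict[str, List[float]], gains_db: Dict[str, float]) -> List[float]:
    """Mix equally long mono objects with a per-object user gain (default 0 dB)."""
    length = len(next(iter(objects.values())))
    mix = [0.0] * length
    for name, samples in objects.items():
        g = db_to_linear(gains_db.get(name, 0.0))
        for i, s in enumerate(samples):
            mix[i] += g * s
    return mix


objects = {
    "dialogue": [0.10, 0.20, -0.10],
    "crowd":    [0.30, -0.30, 0.20],
    "engine":   [0.05, 0.05, 0.05],
}

default_mix = render(objects, {})
# The listener boosts speech by 6 dB and leaves the other objects untouched.
clear_speech_mix = render(objects, {"dialogue": 6.0})

print(default_mix)
print(clear_speech_mix)
```

Because the dialogue arrives as its own stream, raising its level does not require re-equalizing or otherwise processing the crowd and engine signals.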

Analysis of Implications: The Invisible Foundation

The transition of compression from a media tool to a foundational data technology has profound implications for the global economy and environment.

- Economic Scalability: Without bandwidth reductions on the order of the 97% that Feature Coding for Machines can achieve, the infrastructure costs of the "AI of Everything" would be prohibitive. Compression will be a primary factor in determining which AI services can be deployed at scale on mobile networks.

- Environmental Impact: By integrating energy-aware metadata and optimizing for "joules per bit," compression standards are becoming a front-line tool in the tech industry’s fight against its massive carbon footprint.

- Information Sovereignty: In an era of deepfakes, standards like JPEG Trust move the responsibility for authenticity from the platform to the data itself. This shift is essential for maintaining public trust in digital information.

- Data Longevity: The exploration of biological storage (JPEG DNA) acknowledges that our current digital civilization is surprisingly fragile. Standardizing DNA-based storage is an attempt to ensure that the vast wealth of human knowledge being generated today remains readable centuries from now.

In conclusion, compression is no longer just about making things smaller; it is about making things possible. As the "operating system" for the global datasphere, these standards define the limits of what we can transmit, how efficiently we can learn, and ultimately, what we can believe in an increasingly digital world. The work of groups like JPEG and MPEG ensures that even as the volume of data reaches unfathomable scales, it remains a tool for human progress rather than an unmanageable burden.